The author is correct that "It's the totalitarian tip-toe," because there is no other way to use blunt force to summarily drop cash on a worldwide basis. However, the WEF's boast is mostly hot air. Only two countries, Zimbabwe and Nigeria, have officially launched a CBDC. Only a few countries have passed the "proof of concept" phase. Several countries have canceled their CBDC projects, including the Philippines, Kenya, Denmark, Ecuador, and Finland. Several large countries are in the pilot stage, including China, Russia, and India.

CBDC

You didn't get the memo? Terrorists used to be crazy people who blew themselves up in crowds of innocent bystanders, who flew airliners into skyscrapers killing thousands, who chanted "death to America" while dancing in the streets, and who raped, pillaged, and plundered for sport. Now, ordinary, peace-loving Americans are defined as terrorists, and this means YOU.

Police State

Sam Altman claims that ChatGPT is intelligent yet neutral in political persuasion, but that is far from the truth for two reasons: first, it learns from a woke Internet, and second, it is programmed to filter out narratives hostile to global elitists.

AI

Transhumanism, Digital Twins And Technocratic Takeover Of Bodies

Skynet Has Arrived: Google Follows Apple, Activates Worldwide Bluetooth LE Mesh Network

UBI: Red States Fight Urge To Give ‘Basic Income’ Cash To Residents

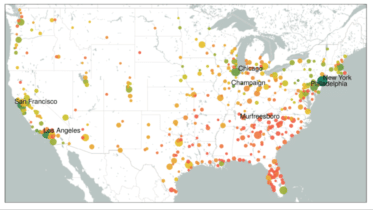

Technocracy, Total Surveillance Society