
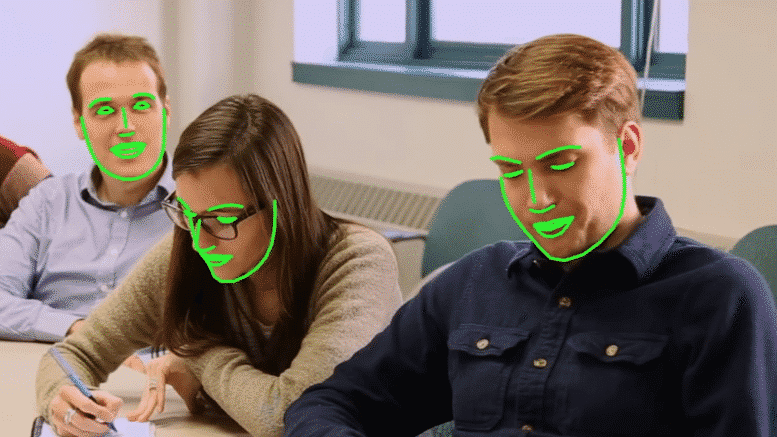

Chances are, you’re already familiar with facial recognition software, even if you’ve never spent time in an artificial intelligence lab. The algorithm that Facebook uses for tagging photos, for example, is a version of facial recognition software that can identify faces with 97.25 percent accuracy. The problem with most of today’s facial...