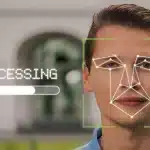

Whatever IBM's motive for dumping its facial recognition business, the move makes a bold statement to the entire industry, including Amazon and Google: this technology has no place in law enforcement.