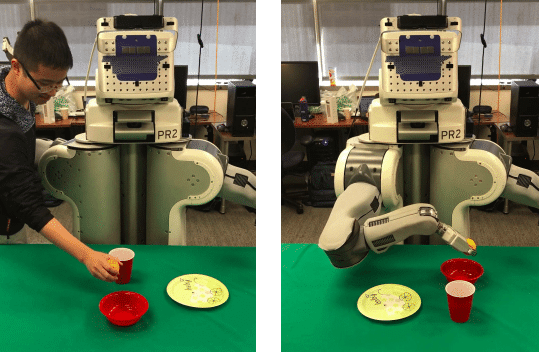

A new AI can mimic a human movement after seeing it just once, much as humans learn from infancy. Of course, there is no measure of intent, of why the human performed the movement in the first place. This is typical Technocrat thinking: 'why' is not as important as 'what'.