
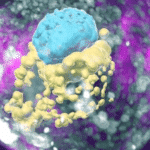

Google's Advanced Technology and Projects (ATAP) division is a little like the Pentagon's DARPA: it creates oddball products that examine everything in the human environment in order to anticipate your next move or intention.