
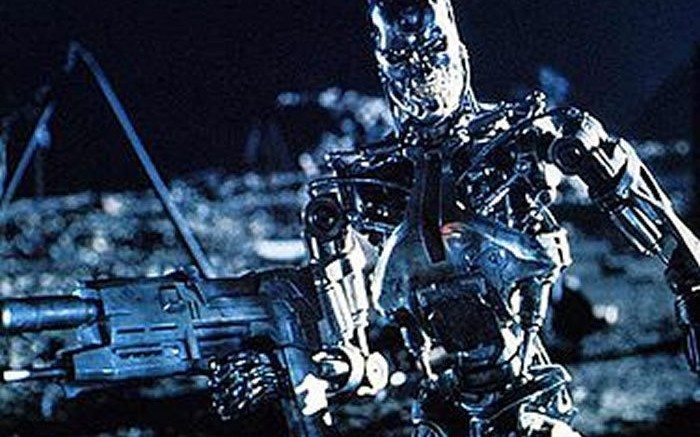

Free-thinking AI robots could end up destroying mankind, or even completely changing what it means to be human, if we let them think for themselves, a scientist has warned. Dr Amnon Eden said more needs to be done to examine the risks of continuing towards an AI world. He warned that...