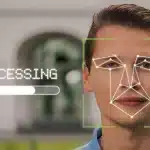

Always claiming the position of the centrist, balanced thought leader, the WEF "offers a framework to ensure the responsible use of facial recognition technology." It shows little concern for personal privacy; what matters is only whether the technology is accurate enough to minimize wrongful arrests. The focus is on police actions to contain and predict criminal activity, thus promoting a police state.